Tomàs Ortega

Tomàs Ortega

Research Software Engineer

Princeton Language and Intelligence

Princeton University

Originally, I am from Sant Cugat (near Barcelona) but I’ve lived in Madison, Pasadena, Irvine, and now in Philadelphia. I’m a Research Software Engineer with the Princeton Language and Intelligence group at Princeton University.

I completed my PhD in Electrical Engineering and Computer Science at UC Irvine, under the supervision of Hamid Jafarkhani. I have previously been at NASA’s JPL with Marc Sanchez-Net, at the Vector Institute with Xiaoxiao Li, and at Nokia Bell Labs (Murray Hill) with Sahil Tikale.

Before joining UCI, I graduated from Universitat Politècnica de Catalunya with a double degree in Mathematics and Electrical Engineering as part of the CFIS program, and a MS in Advanced Mathematics and Mathematical Engineering, focused on discrete mathematics and information theory.

For my undergrad thesis I worked with the Communications Architectures and Research Section at NASA’s Jet Propulsion Laboratory, helping build the next-generation space radios.

Research interests

My work bridges theory and practice by incorporating real-world network constraints into the analysis of collaborative systems. I’m broadly interested in optimization, information theory, and AI. My dissertation focused on distributed algorithms and privacy-preserving machine learning, particularly in the context of real-world communication networks. Recently, I have been interested in AI for theorem proving and formalized mathematics.

Some highlighted projects

Decentralized online learning without learning rates

Tuning learning rates is a major pain point in online learning. In the decentralized setting, this problem is worse since nodes have to coordinate between them and may have different local optimal learning rates.

In this work, we proposed a method to perform decentralized online learning without learning rates, and with sublinear network regret bounds. To achieve this, we extended the parameter-free framework from Francesco Orabona and Dávid Pál with a gossip consensus scheme. We also developed a new betting-function framework inspired by this work, which is more amenable for analysis, and which we believe is of independent interest.

We found that our analysis needed a linear gossip schedule (in learning round t, we perform t rounds of gossip) to achieve sublinear regret bounds, which is impractical. However, in practice, we can get away with a constant number of gossip rounds in all our experiments. We conjecture that the linear gossip schedule is an artifact of our analysis, and that a constant gossip schedule is sufficient, but proving this is an open problem.

The code for this project is available here.

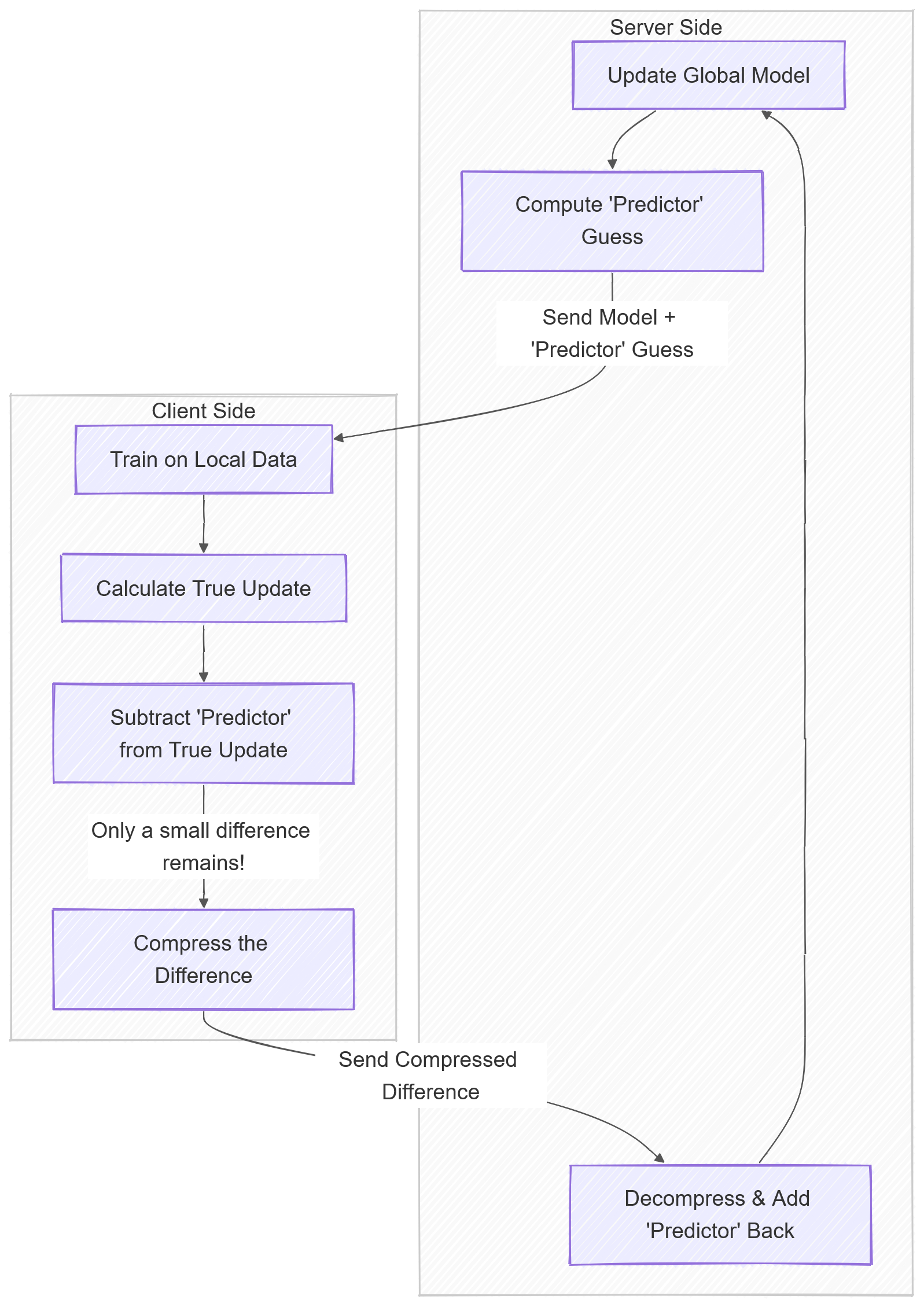

Privacy-preserving error feedback for distributed learning

Practical distributed learning often uses biased aggressive compression for communication from the clients to the server. However, to guarantee convergence, we need client-specific control variates to perform error feedback.

Individual control variates kill privacy guarantees, and do not scale with the number of clients. To fix error feedback, we proposed a framework that leverages previous aggregated client updates for feedback. This allows highly aggressive compression without the privacy and scale issues that come with client-specific control variates. The open-source code is available here.

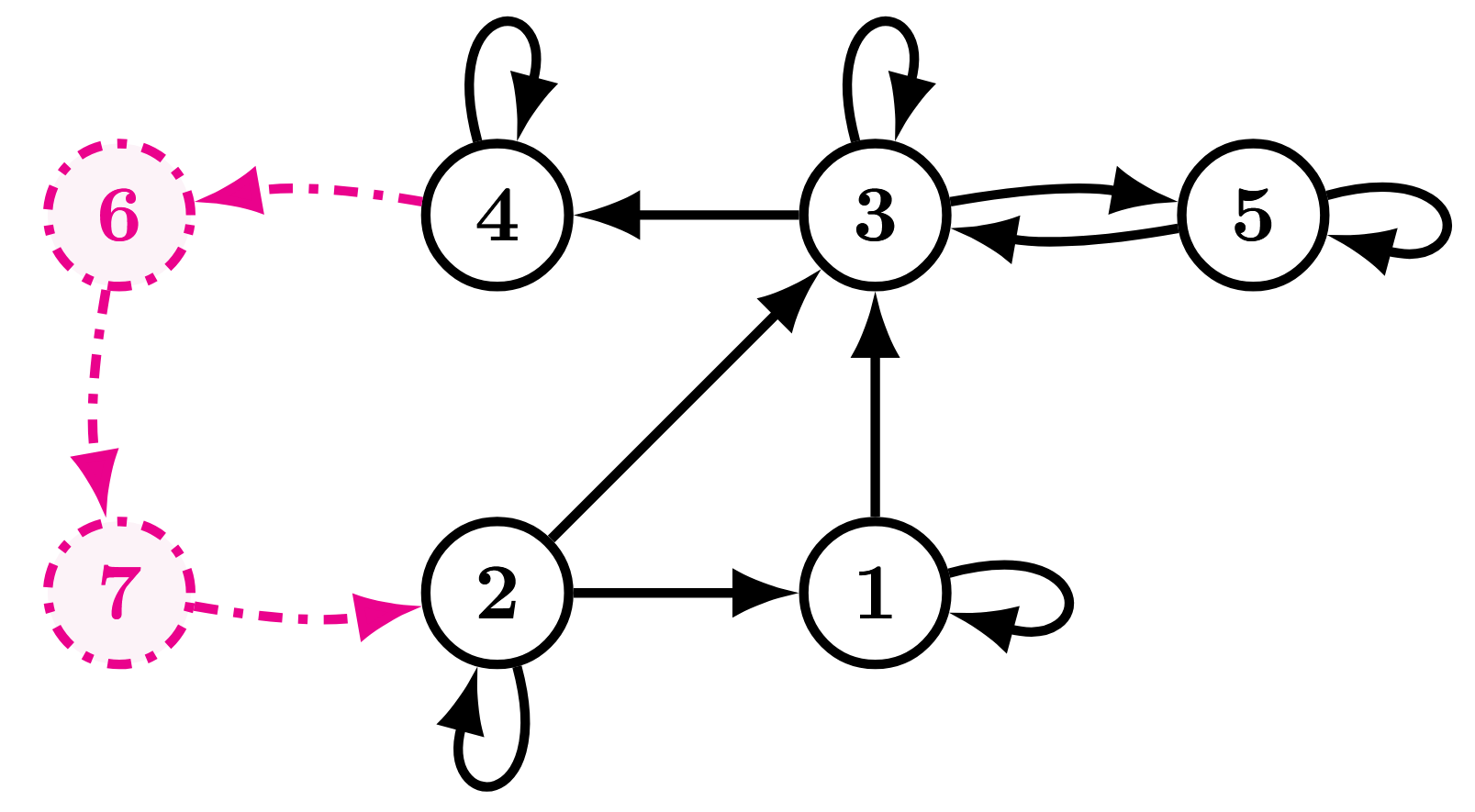

Truly decentralized learning on directed graphs

Decentralized optimization algorithms typically require communication between nodes to be bi-directional.

In the directed case, for non-convex losses, existing algorithms required nodes to know how many listeners they have (knowledge of their out-degree). We proposed a series of works that circumvent this requirement.

A nice property of this framework is that it naturally accomodates networks with delays, as one can add imaginary nodes to the network to model delays, and use the same analysis to obtain convergence guarantees.

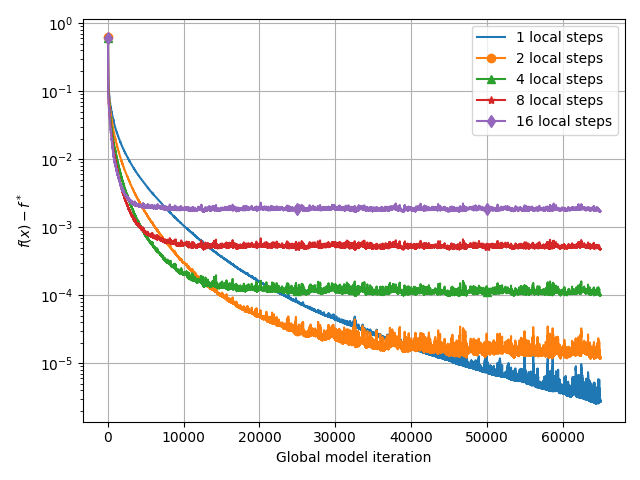

Asynchronous federated learning meets compression

In 2021, Meta announced FedBuff, a system for asynchronous federated learning with buffered aggregation. In plain words, the server waits for a certain number of client updates to arrive, and then performs an aggregation step (averaging over the clients in the buffer).

However, their analysis did not consider communication compression, which is a key component of practical federated learning systems. We proposed an algorithm that has a similar buffered asynchronous structure, but which also has a hidden-state feedback mechanism to allow for highly aggressive communication compression.

We proved that our algorithm behaves nicely with respect to the compression parameter. Namely, the asynchrony and compression error cross-terms are negligible in the convergence rate!

Our analysis revealed a bug in the original FedBuff convergence proof, which we fixed. Plus, we did not require the bounded gradient assumption that the original FedBuff paper made. Independently, Mohammad Taha Toghani and César A. Uribe had also proposed a fix for this proof, but they did not consider compression.

Proving stuff with Lean

On the side, I enjoy theorem proving with Lean. In the future I would like to formalize more of my proofs using Lean. I used to organize a group for people in the greater LA area to learn how to write mathematical proofs in Lean. I have contributed to Compfiles, Sphere-Packing, and OrderedSemigroups, among others.

Error correcting codes from Generalized Quadrangles

Together with Simeon Ball, we developed a method to construct point-line incidence matrices for Generalized Quadrangles in polynomial time (polynomial to the 4th, 6th and 11th power for different GQs but hey, still better than existing exponential methods).

This allowed us to construct the largest point-line GQ incidence matrix repository that currently exists. But, better than that, these point-line incidence matrices are quasi-cyclic! This is a really desireable property if you want to construct error correcting codes from GQs, which achieve a very good error rate to round of belief propagation decoding ratio. The repository also has .alist matrices to easily run LDPC simulations.

Maintaining communication while entering the Martian atmosphere

During the Entry, Descent and Landing (EDL) phase of rover missions to Mars, the communications are lost due to the large Doppler shift caused by the spacecraft dramatically decelerating when hitting the Martian atmosphere. During my time at JPL, we proposed a system to enable us to track this shift in Doppler and maintain comms throughout.

This system will be implemented in the next generation of NASA’s spacecraft radios.

As a part of this line of work, we derived an analytical approximation for Phase-Locked Loops frequency error standard deviation, which is of independent interest.

If you made it this far

Here is a Snake game I coded in Java.

Or, if you’re an NBA nerd, check out Hardwood Heuristics, where I try to see if some basketball tropes are actually backed up by data.